We work on a variety of topics related to the perception of natural stimuli, which we study with electroencephalography (EEG). We are also trying to understand the basic mechanisms by which transcranial electric stimulation (TES) affects learning, and we develop tools for targeting TES. We also have projects that use deep learning to analyze MRI images.

Neural processing of natural stimuli

We are interested in how people process natural stimuli and use EEG to measure stimulus-evoked neural activity. We also collect eye movements, pupil dilation, and heart rate, as they are remarkably connected to our minds. In our experience, small changes in the task can have large effects on the resulting brain signals. We therefore emphasize the most natural setting possible. Video and audio narratives provide a good balance between reproducibility and natural dynamics. We have also worked with music and video games, including virtual reality (VR) environments. The workhorse for the analysis of these natural stimuli has been inter-subject correlation (ISC), which does not require any kind of labeling of the stimulus. We also use temporal response functions (TRFs), which do require labeling, but provide clear interpretations of how different stimuli and behaviors relate to neural activity.
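To make the idea of ISC concrete, here is a minimal sketch that averages the Pearson correlation over all pairs of subjects for a single signal (for example one EEG channel, gaze position, or heart rate). It is a simplified illustration and not necessarily the analysis used in the publications listed here, which may rely on more elaborate multichannel methods. The function names `pearson` and `pairwise_isc` and the toy data are assumptions made for this example.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length signals."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def pairwise_isc(signals):
    """Average correlation over all subject pairs (hypothetical helper).

    `signals` holds one time series per subject, all recorded while the
    subjects experienced the same stimulus. No stimulus labels are needed.
    """
    pairs = list(combinations(signals, 2))
    return sum(pearson(x, y) for x, y in pairs) / len(pairs)


# Toy data: three "subjects" whose responses share the same time course
# but differ in gain and offset, so every pair is perfectly correlated.
shared = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
subjects = [
    shared,
    [2.0 * s + 1.0 for s in shared],
    [0.5 * s for s in shared],
]
isc = pairwise_isc(subjects)  # close to 1.0 for this toy data
```

With real recordings the responses are noisy, so ISC values are typically far below 1 and are compared across conditions (for example attentive versus distracted viewing) rather than read in isolation.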

Current projects:

- Attention in online educational videos: Jens Madsen

- Attention to speech: Ivan Iotzov and Jens Madsen

- Synchronization of physiological and behavioral signals: Jens Madsen

- Synchronization of neural activity to music: Jens Madsen

- Semantic novelty during natural vision: Vinay Raghavan

- Movement and navigation in virtual reality: Vinay Raghavan

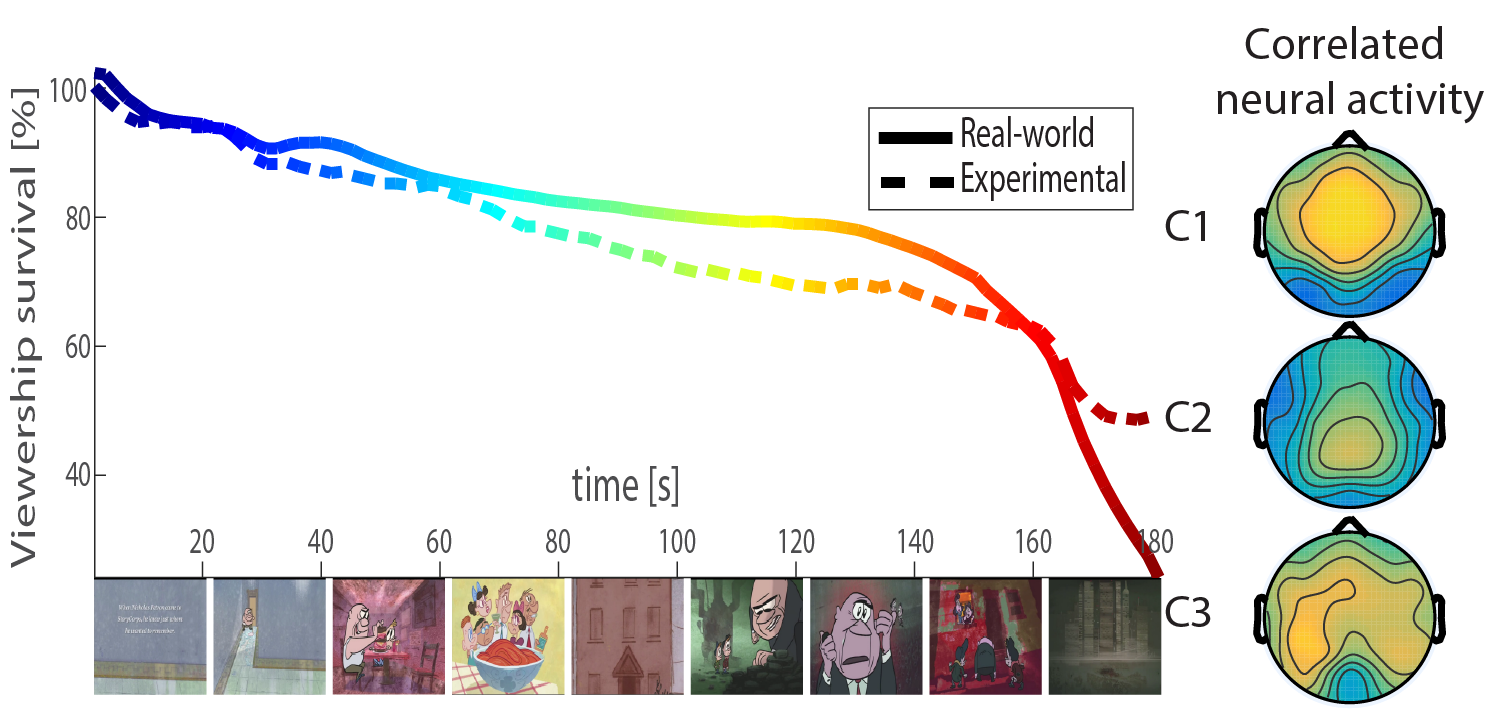

ISC of EEG appears to be a marker of engagement with a video stimulus. It is therefore predictive of the behavior of large audiences, including tweeting, viewership size and preference ratings. Engaging narratives synchronize not just our brains, but also our perception of time.

Attention and memory for narratives | Ki et al. 2016 | Cohen et al. 2016 | Cohen and Madsen et al. 2018

ISC of EEG is dramatically modulated by attention.

Video games | Dmochowski et al. 2016

For the case of unique experience, we find that correlation with the actual stimulus also captures attention. Surprisingly, we find a strong coupling of the stimulus with a supramodal component of the EEG.

Videos in the classroom | Poulsen et al. 2016

ISC of EEG during videos can be measured simultaneously in the classroom.

Synchronized eye movements | Burleson-Lesser et al. 2017 | Madsen et al. 2021

Synchronized eye movements are modulated by attention and can predict test scores of students in online video education. Attention can be measured remotely using standard web cameras without the need to transmit any personal information, thus preserving privacy. See video examples.

Synchronized heart rate | Perez and Madsen et al. 2020

Narratives synchronize heart rate between individuals and could be a predictor of consciousness.

Engagement with music | Madsen et al. 2019

ISC of EEG in people listening to music is modulated by attention and is influenced by people's knowledge of the music and their musical training. See video examples.

Multimodal synchrony with video | Madsen et al. 2022

Cognitive processing of a common stimulus synchronizes brains, hearts, and eyes.

Natural reading | Raghavan & Parra, 2025

As readers move their eyes across the page, they primarily process just the word they are fixated upon. However, we note two key exceptions: (1) speech-related processing of words behind fixation, potentially relating to the inner voice, and (2) contextual processing of words to the left and right of fixation, which is associated with slower reading speeds.

Semantic novelty | Nentwich et al. 2023 | Raghavan et al., 2026

The brain shows evidence of integrating high-level "semantic" information across fixations.

Movement and navigation in virtual reality | Project Page

How do individuals coordinate head, eye, and body movement during exploration in VR? How do spatial boundaries influence movements and neural activity during navigation in VR?

Mechanisms of TES

Current projects:

- Motor sequence learning in humans: Gavin Hsu

- Learning to reach in rodents: Forouzan Farahani

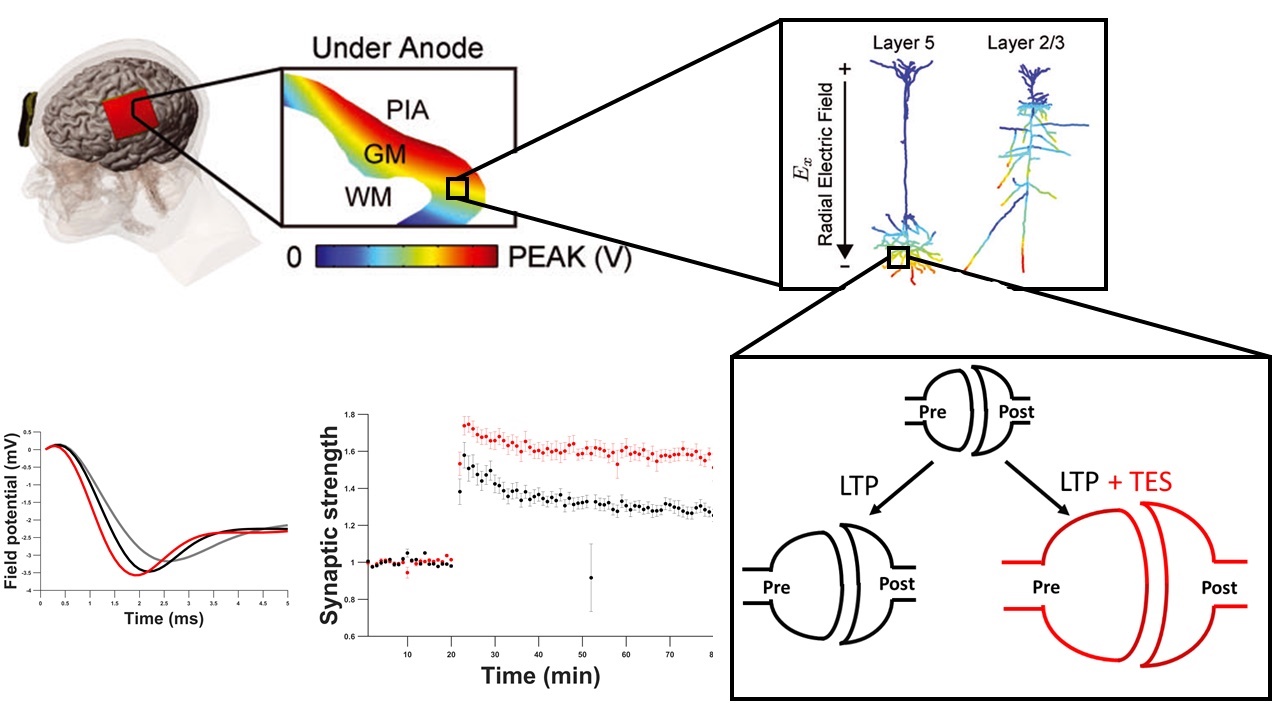

Acute effects of tACS | Reato et al. 2013

Long-term effects of tDCS | Reato et al. 2015 | Kronberg et al. 2017 | Kronberg et al. 2019

Effects of tDCS on motor learning | Hsu et al. 2022

Targeting of TES with electrode arrays

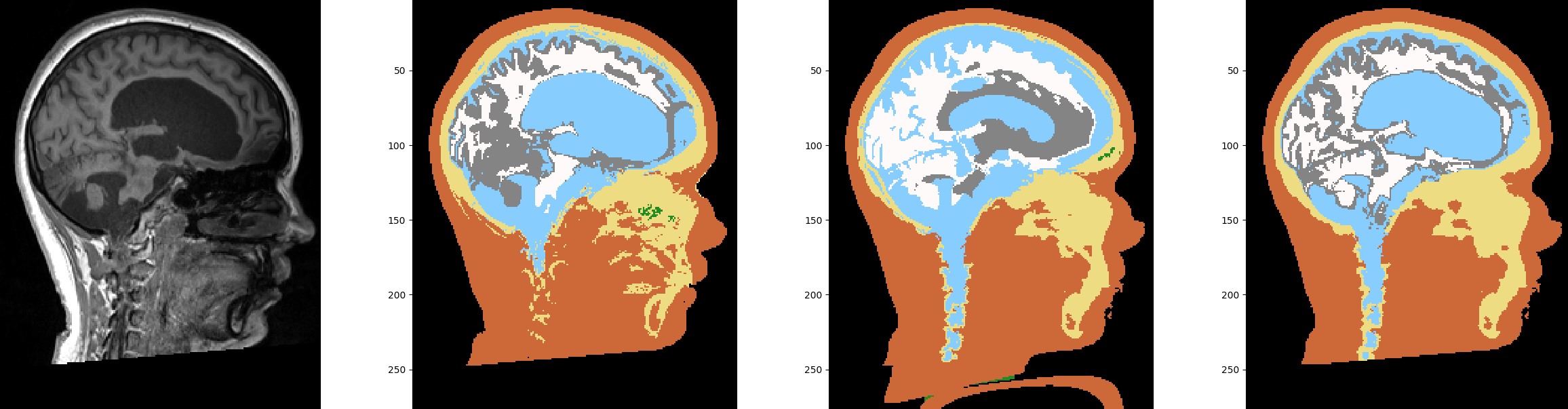

TES is often applied using simple sponge electrodes. We have advocated the use of "high-definition" stimulation using arrays of small electrodes (comparable to what is done with EEG). To this end we have developed methods to steer currents with these arrays, and to build individualized head models, in particular to account for altered anatomies in stroke patients. We have been the first to thoroughly validate the corresponding current-flow models in humans.
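One generic way to think about steering currents with an electrode array is as a least-squares problem: given a linear model of how each electrode's current contributes to the electric field at a set of brain locations, choose the currents that best reproduce a desired field. The sketch below illustrates that idea only; it is not necessarily the optimization used in the methods described here. The names `steer_currents`, `lead_field`, and `ridge` are hypothetical, and the lead-field matrix is random toy data standing in for what an individualized head model would provide.

```python
import numpy as np


def steer_currents(lead_field, desired_field, ridge=1e-6):
    """Ridge-regularized least-squares electrode currents (illustrative).

    lead_field:    (n_locations, n_free_electrodes) array; column j is the
                   field produced by unit current through electrode j,
                   returned through a shared reference electrode.
    desired_field: (n_locations,) target field values.
    Returns currents for all electrodes, with the reference electrode last.
    """
    A = np.asarray(lead_field, dtype=float)
    e = np.asarray(desired_field, dtype=float)
    n = A.shape[1]
    # Solve the regularized normal equations (A^T A + ridge * I) s = A^T e.
    s = np.linalg.solve(A.T @ A + ridge * np.eye(n), A.T @ e)
    # The reference electrode carries the return current, so that the
    # currents over the whole array sum to zero.
    return np.append(s, -s.sum())


# Toy example: 50 field locations, 7 free electrodes plus one reference.
rng = np.random.default_rng(0)
toy_lead_field = rng.standard_normal((50, 7))
true_currents = rng.standard_normal(7)
toy_target = toy_lead_field @ true_currents

currents = steer_currents(toy_lead_field, toy_target)
```

A practical formulation would also need safety limits on the current per electrode and on the total injected current, which this sketch omits.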

Current projects:

- Multifocal targeting: Yu (Andy) Huang

- ROAST: Yu (Andy) Huang

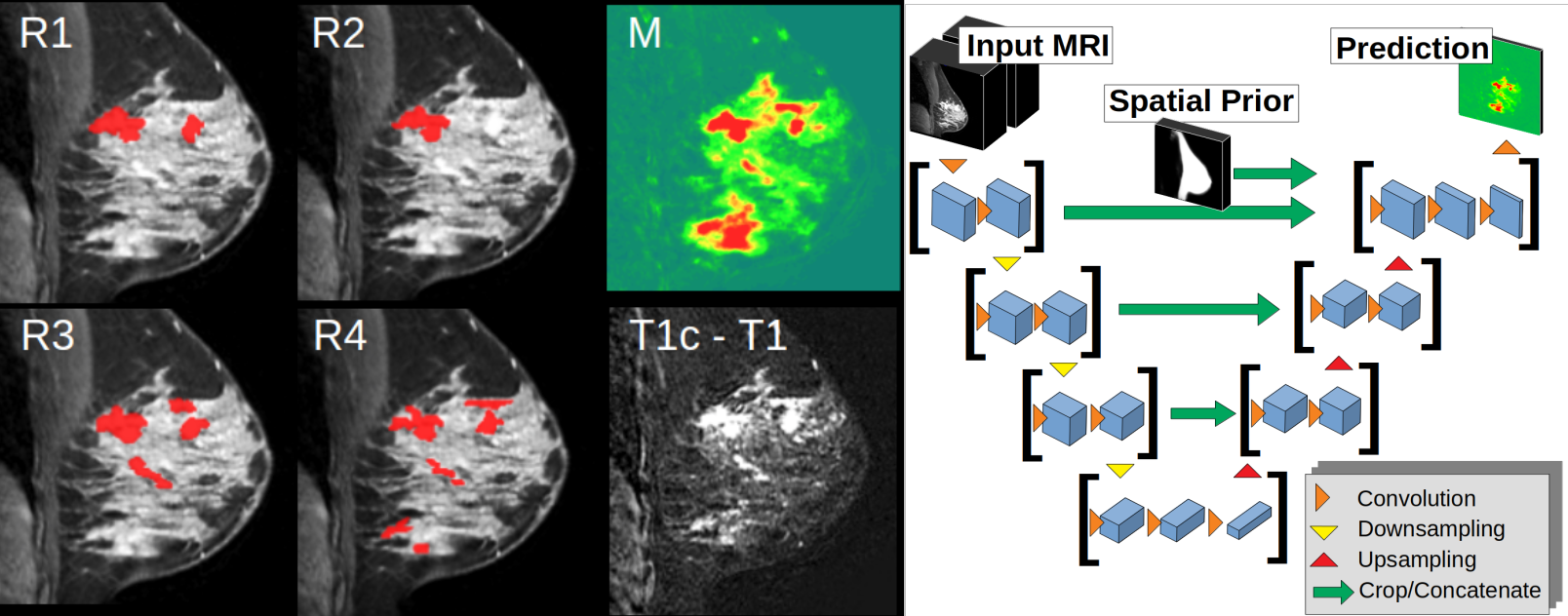

Deep-learning for MRI

We developed deep-learning architectures to analyze 3D images, specifically for brain segmentation and breast tumor segmentation. Head and brain segmentation is of particular interest because of the current-flow modeling described above. A collaboration with Memorial Sloan Kettering Cancer Center involves projects related to breast and brain MRI.

Current projects:

- Automated brain segmentation in the presence of lesions:

- Breast cancer segmentation